
The Tiny Synth That Accidentally Became a Full Music Workstation

Why I Built It

I built this because I kept running into the same problem while working on game prototypes: I needed audio that fit the project, but I did not want to stop what I was doing, open a DAW, set everything up, and switch into a completely different workflow just to make a few sounds.

Stock libraries are fine for placeholders, but they usually sound generic or mismatched. Custom audio is expensive when you are still figuring things out. A full music-production workflow makes sense when you are ready for it, but it is too heavy for the earlier stage where you are still experimenting with mechanics, mood, and pacing.

So the original goal was simple: make it easy to open a browser tab, build a sound quickly, and stay in the same creative headspace.

How It Started

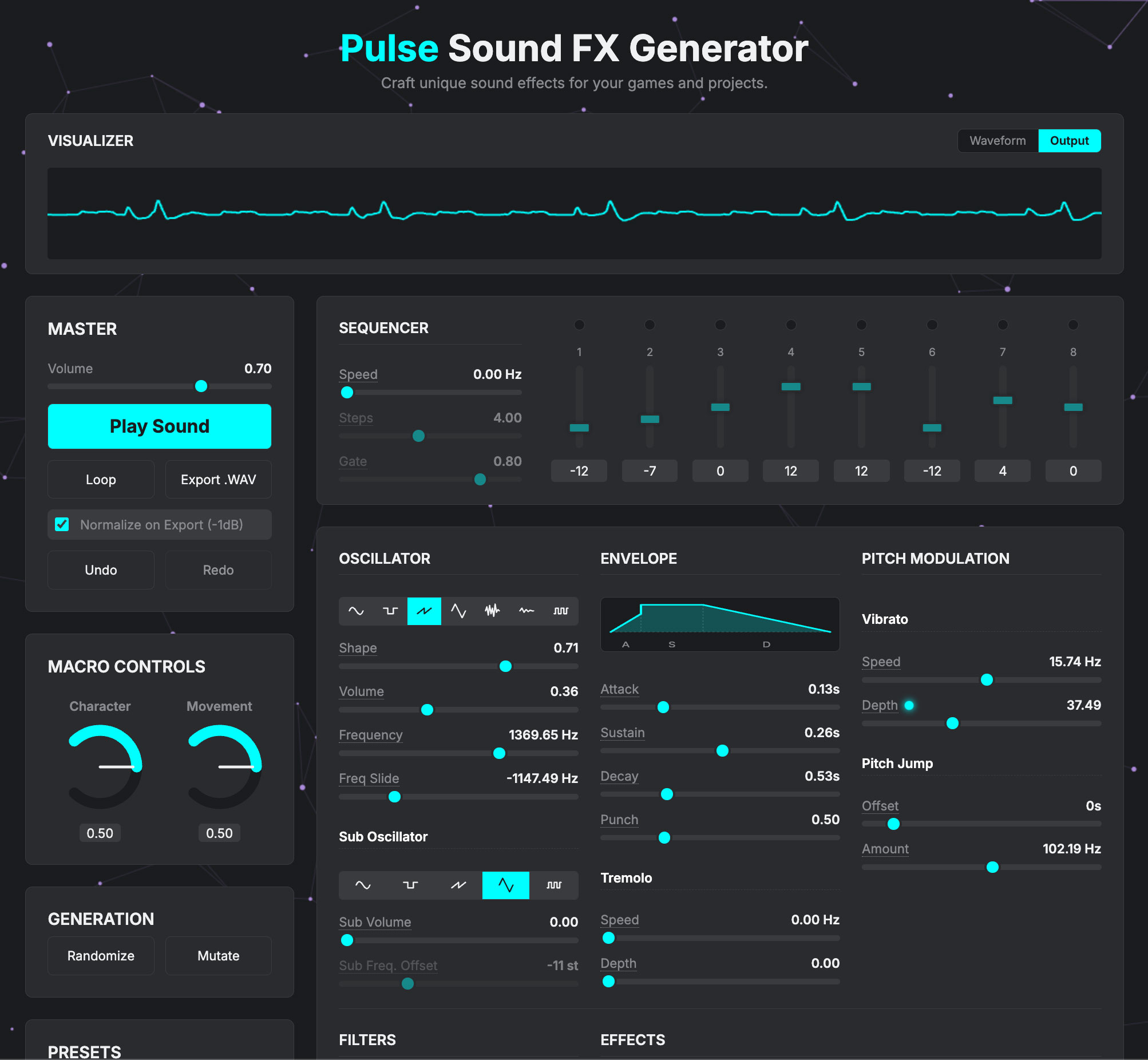

The first version was Pulse Sound FX Generator. It was basically a small browser synth for making sound effects and rough game audio ideas.

That alone was useful. I could make UI sounds, blips, impacts, sweeps, simple tonal effects, and other small pieces of audio that actually felt like they belonged to the project I was working on. They were not polished final assets, but they were specific, and that mattered more than polish at that stage.

The problem was that once I had that, I immediately wanted more. I wanted to save sounds, make variations, play notes instead of just one-off effects, and sketch musical ideas without leaving the browser. That is how the tool started expanding.

What It Turned Into

The scope creep was predictable. I kept adding things because I kept needing them.

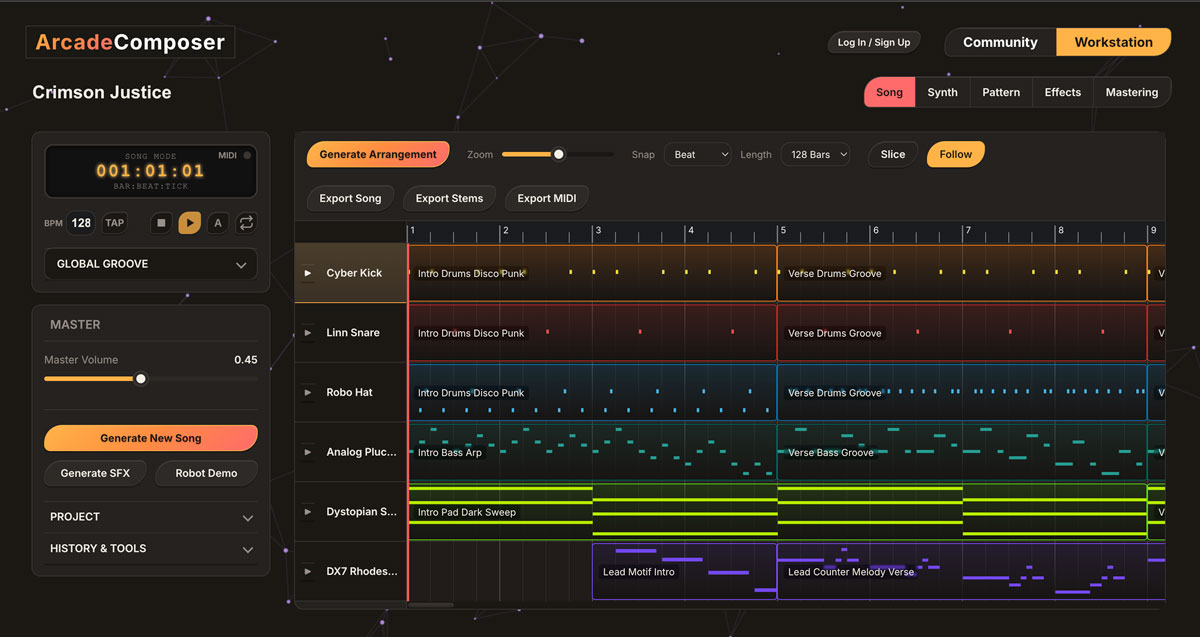

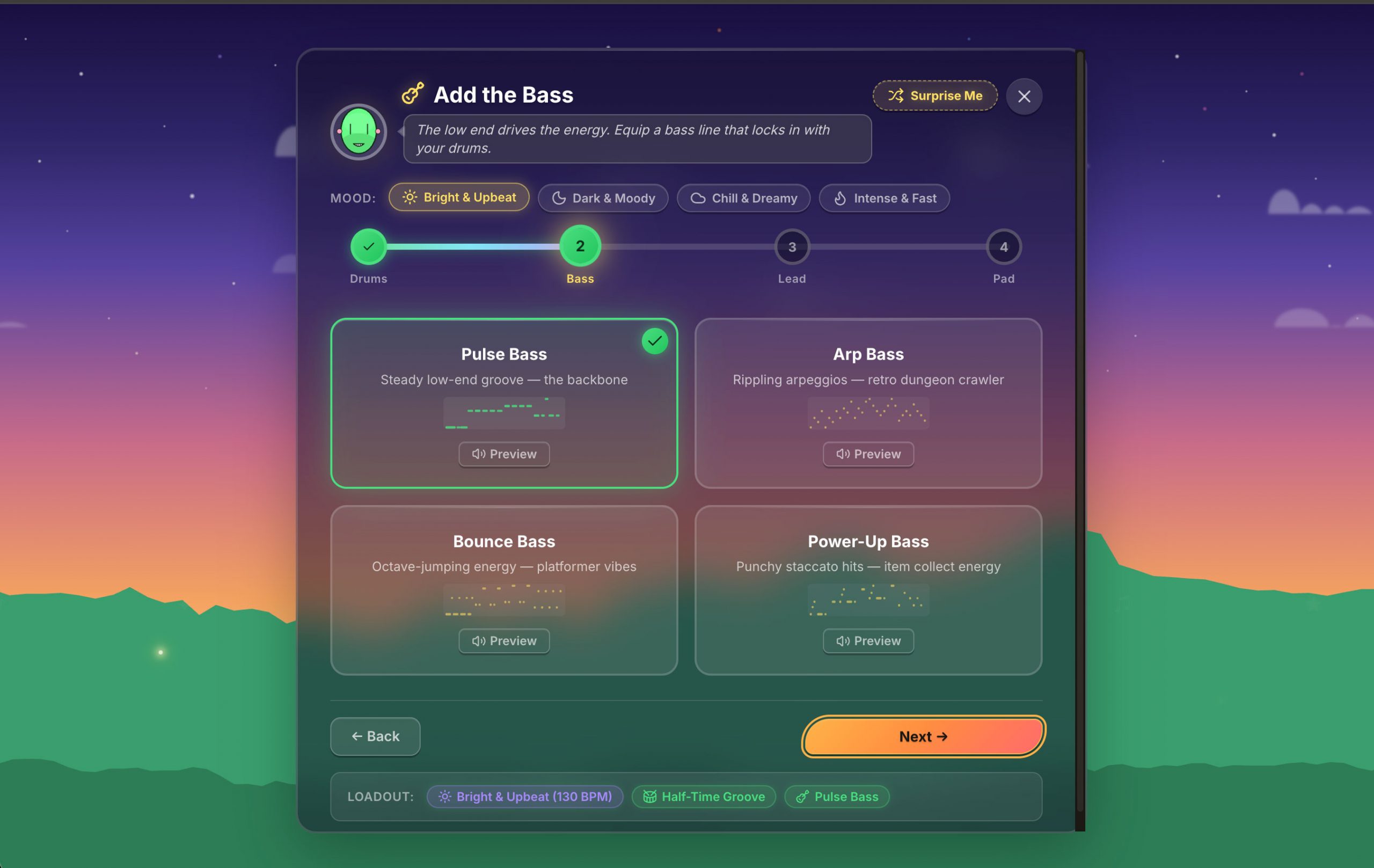

First it became a more capable synth: more oscillators, more modulation, more shaping tools, more ways to build sounds that felt substantial enough to use musically instead of just as effects. Then it became clear that if it could already make usable patches, it also needed basic composition tools. So I added polyphony, pattern editing, an arrangement view, automation, and MIDI support.

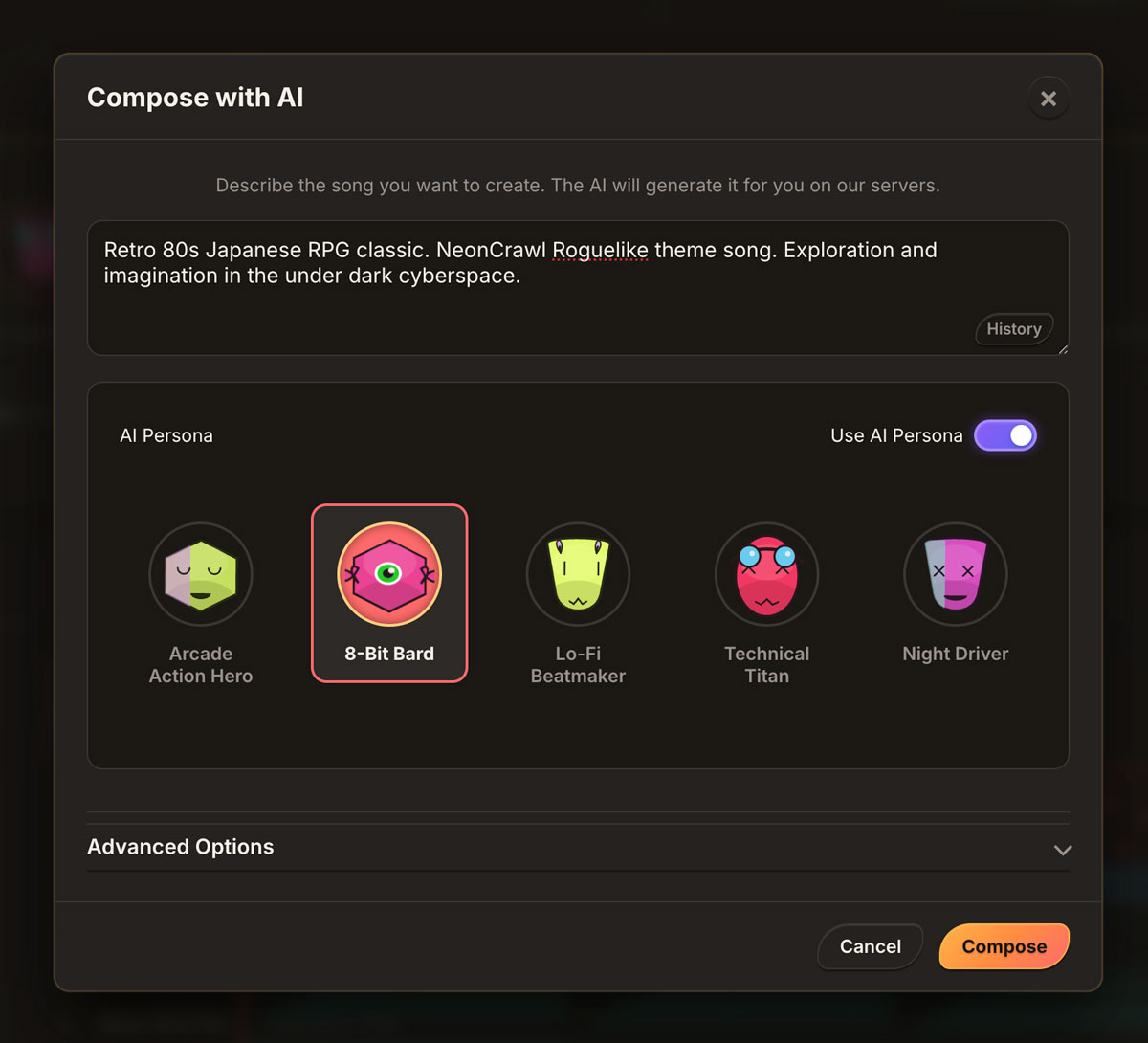

Later I added AI composition, not because I wanted fully automated music, but because sometimes the hardest part is getting started. A rough generated idea is often enough to react to, edit, and push somewhere more specific.

At that point it was no longer a tiny sound-effect tool. It had turned into a full browser-based workstation, which became Arcade Composer.

What Stayed the Same

Even though the feature set got much bigger, the underlying goal did not really change. I still wanted the same thing I wanted at the start: a fast way to get from an idea to usable game audio without breaking momentum.

That goal shaped most of the design decisions. I was not trying to clone a DAW in the browser for the sake of it. I was trying to build something that made sense next to a game prototype. That meant low friction, quick iteration, and tools that felt useful in the context of game development rather than a traditional studio workflow.

That is also why I care so much about immediacy. If something slows me down, breaks playback, or makes the tool feel awkward during real work, I notice it right away because I am also the person using it for actual projects.

The Most Annoying Technical Problem

One of the worst recurring problems in the project was stale closures in React.

The playback engine runs through a Web Worker, and the worker sends timing and playback messages back to the main thread. The problem was that React callbacks could end up holding onto outdated state, which meant playback logic would sometimes read old instrument data or an old version of the project. That kind of bug is especially nasty because everything is only slightly wrong, which makes it harder to trace.

The fix was to stop relying on captured values in the live playback path and move that data access to refs. Straightforward in hindsight, but it took longer than it should have because the bug kept showing up in slightly different ways before I made the pattern consistent across the project.
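As a rough illustration of that pattern, here is a framework-free sketch rather than code from the actual project. The `Project` shape, the handler names, and the instrument strings are all hypothetical, and the `Ref` interface only mimics the `{ current }` object that React's `useRef` returns. It shows why a callback registered once (for example, as a worker message handler) keeps seeing old data when it captures a value, and why reading through a ref does not have that problem.

```typescript
// Hypothetical project shape; the real project data is not described in the post.
interface Project {
  version: number;
  instrument: string;
}

// Mimics the shape of a React ref: a stable object with a mutable `current` field.
interface Ref<T> {
  current: T;
}

type TickHandler = (step: number) => string;

// Stale pattern: the handler closes over the `project` value that existed
// when the handler was created, and never sees later replacements.
function makeCapturedHandler(project: Project): TickHandler {
  return (step) => `step ${step}: ${project.instrument} v${project.version}`;
}

// Ref pattern: the handler closes over the stable ref object only,
// and reads `.current` each time a tick arrives.
function makeRefHandler(projectRef: Ref<Project>): TickHandler {
  return (step) =>
    `step ${step}: ${projectRef.current.instrument} v${projectRef.current.version}`;
}

// Initial state, and handlers registered once (as a worker listener would be).
let project: Project = { version: 1, instrument: "square-lead" };
const projectRef: Ref<Project> = { current: project };

const capturedHandler = makeCapturedHandler(project);
const refHandler = makeRefHandler(projectRef);

// Simulated state update: a new object replaces the old one (as with
// immutable state updates), and the ref is pointed at the new object.
project = { version: 2, instrument: "saw-bass" };
projectRef.current = project;

// A playback tick arrives after the update.
const staleResult = capturedHandler(0); // still describes version 1
const freshResult = refHandler(0);      // describes version 2

console.log(staleResult);
console.log(freshResult);
```

In a React component, the equivalent would typically be keeping the ref in sync with state on every render and having the worker's message handler read from the ref instead of from variables captured at registration time.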

That was a good reminder that building real-time audio tools on the web is often less about the glamorous big ideas and more about solving ugly timing and state problems until the tool becomes dependable.

How It Changed My Thinking

The bigger change for me was not just that I built a music tool. It was that I started thinking about audio differently.

Before this, I mostly treated music like a more traditional asset pipeline: write the track, export it, bring it into the game, move on. That still works, and I am not against it. But building this pushed me toward thinking of audio as part of the system design instead of just something added later.

That matters more in projects with procedural or modular structures. If everything else in the game is being recombined, adapted, and iterated on, it makes sense to want the audio workflow to be more flexible too. Not fully automated, and not disconnected from taste or authorship, but more adaptable and easier to shape in context.

That shift probably changed my workflow more than anything else. It made audio feel less like a final deliverable and more like something I could test, adjust, and develop alongside the rest of the game.

Why I Kept Building It

There is not really a grand lesson here. I just kept using the tool, so I kept improving it.

That is usually the best signal you get with small internal tools. Most experiments do not go very far. Some are just temporary utilities. But every now and then, one keeps earning its place because it solves a real problem better than the standard option.

That is what happened here. I did not set out to build a full workstation. I built something small that was useful, then kept extending it because the next missing feature kept becoming obvious.

Where It Is Now

That original browser experiment is now Arcade Composer.

For me, the important part is not the feature list by itself. It is that the tool still does the same thing it did in the beginning: it helps me stay close to the project while I am working. I can sketch sounds, build loops, shape tracks, test mood quickly, and keep moving instead of breaking flow every time I need audio.

That was the whole reason I started building it, and it is still the reason I use it.
