
The Tiny Synth That Accidentally Became a Full Music Workstation

When I started building my recent game prototypes, I was not trying to make a music app. I was trying to solve a very ordinary indie-dev problem: I needed audio that actually fit the game, and I needed it fast.

One project, Neon Crawl, lives in that cyberpunk, synthetic, procedural space where the sound should feel electric, mechanical, and a little unstable. The other, Veil of Flesh and Stars, leans into mutation, dread, and cosmic weirdness. Both of them needed audio that felt specific to their worlds rather than something generic I dragged in just to fill silence. That turned out to be harder than it sounds.

If you have worked on an indie game for any length of time, you have probably run into the same issue. Stock sound libraries are fine for placeholders, but they usually sound like they belong to somebody else’s project. Commissioning custom audio gets expensive quickly, especially when you are still experimenting and don’t even fully know what the project wants yet. Full digital audio workstations (DAWs) are obviously powerful, but they can also pull you completely out of the mindset you were in. You go from prototype mode to music-production mode, and by the time you have opened the project, loaded what you need, routed everything correctly, and remembered what you were trying to do, the original spark is gone.

I didn’t want that kind of friction. I wanted something that let me stay close to the game and close to the feeling I was trying to catch while it was still fresh.

It Started as a Way to Make Better Sound Effects

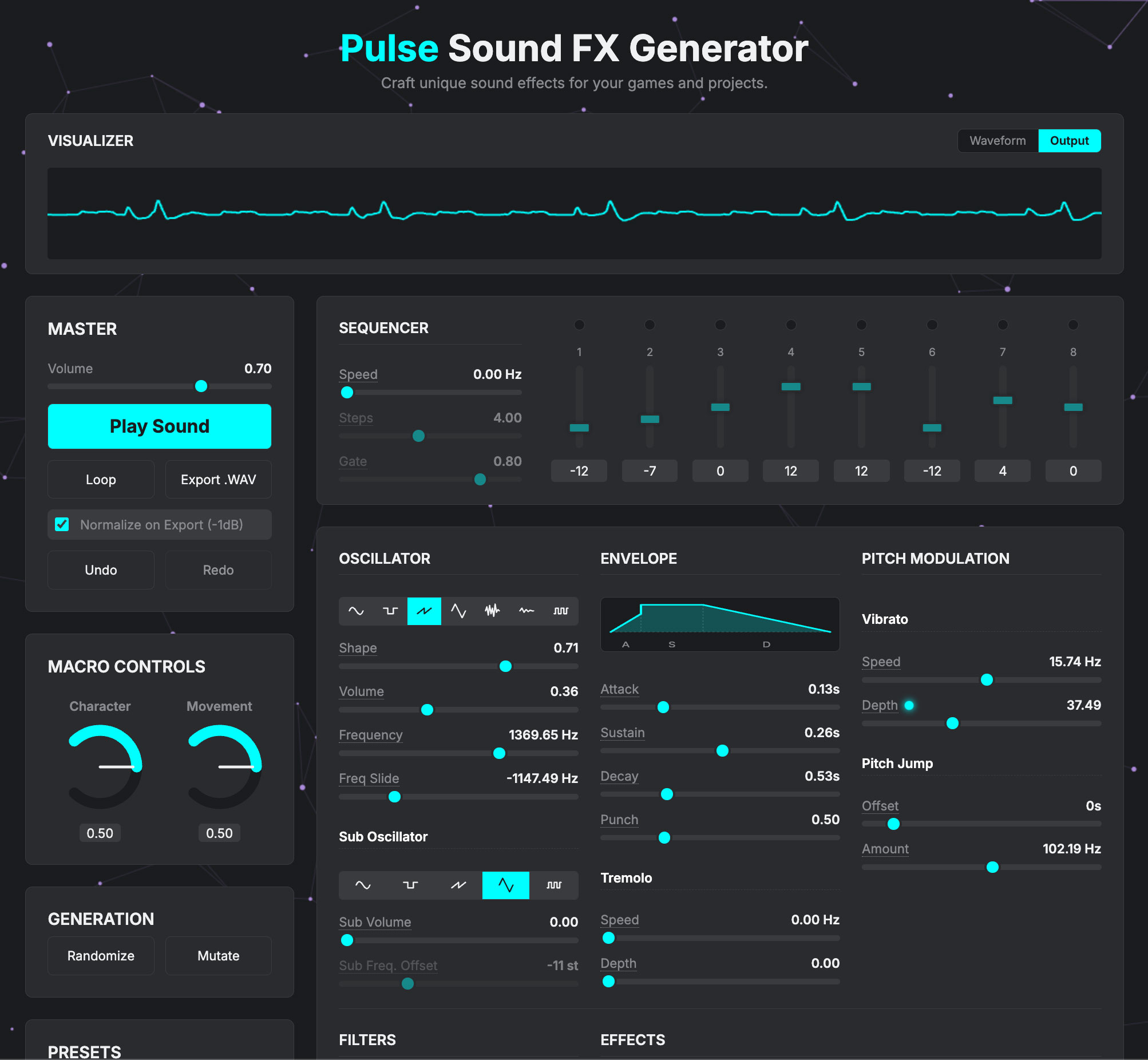

The first version was just me experimenting with the Web Audio API in the browser. I called it Pulse Sound FX Generator, and the goal was very simple: open a tab, twist a few knobs, make something usable, and move on. No install, no giant project, no setup ritual, no detour into technical nonsense when all I really needed was a sound.

Even that early version turned out to be more useful than I expected. Suddenly I could make UI pings, rough weapon sounds, little sci-fi blips, tonal sweeps, mechanical noises, and other tiny pieces of audio that actually felt connected to the project I was building. They were not polished masterpieces, and they were not meant to be. They were just sounds that felt like they belonged to my game instead of sounding like leftovers from somebody else’s library.

That alone made it worth building, but it also revealed the next problem almost immediately. Once I could make sounds quickly, I wanted to save the good ones. Then I wanted variations. Then I wanted to play notes with them. Then I wanted patterns. Then I wanted a way to sketch musical ideas without leaving the browser. That was the point where the project quietly stopped being a small utility and started turning into something larger.

The Scope Creep Was Completely Predictable

I added a second oscillator because the sounds felt too thin. Then I added a sub oscillator because the low end needed more weight. Then I added noise because I wanted hats, grit, and texture without reaching for samples. Then more modulation, because once you start wanting movement, one static sound is never enough.

At a certain point I looked up and realized I was no longer building a tiny browser synth for sound effects. I had built a real synth engine. It had three oscillators, multiple envelopes, dual LFOs, filters, voice modes, distortion, bitcrushing, wavefolding, ring modulation, and all the usual signs that you are no longer “just adding one more thing.” The project had crossed the line, and I had crossed it too.
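For readers who have not met some of those effect names, here is a minimal per-sample sketch of three of them. It is not the engine described in this post: the function names, the default fold threshold, and the way bit depth maps to quantization steps are all assumptions made for illustration.

```typescript
// Hypothetical helpers for illustration only; not code from the actual synth.

/** Bitcrushing: quantize a sample in [-1, 1] to a coarser set of levels. */
function bitcrush(sample: number, bits: number): number {
  if (bits < 1) throw new RangeError("bits must be >= 1");
  // Assumed mapping: `bits` of depth gives 2^(bits-1) steps per polarity.
  const levels = Math.pow(2, bits - 1);
  return Math.round(sample * levels) / levels;
}

/** Wavefolding: reflect anything beyond +/-threshold back toward zero. */
function wavefold(sample: number, threshold: number = 1): number {
  if (threshold <= 0) throw new RangeError("threshold must be > 0");
  let x = sample;
  // Keep folding until the value sits inside the allowed range.
  while (Math.abs(x) > threshold) {
    x = x > 0 ? 2 * threshold - x : -2 * threshold - x;
  }
  return x;
}

/** Ring modulation: multiply a carrier sample by a modulator sample. */
function ringMod(carrier: number, modulator: number): number {
  return carrier * modulator;
}

/** One possible chain: drive the signal into the folder, then crush it. */
function dirtySample(input: number, drive: number, bits: number): number {
  return bitcrush(wavefold(input * drive), bits);
}
```

In a browser these would run once per sample inside whatever produces the audio buffer. The order of the chain is a taste decision, and folding before crushing is just one choice among several.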

One of the moments where that really clicked for me was when I was tweaking a patch that turned into what I would describe as a dirty arcade-acid lead. It was mono, saw-based, aggressive in the right places, and had enough bite to feel like the beginning of an actual musical idea rather than a sound-design placeholder. Once I heard that, the obvious question was why I was still pretending this tool was only for effects.

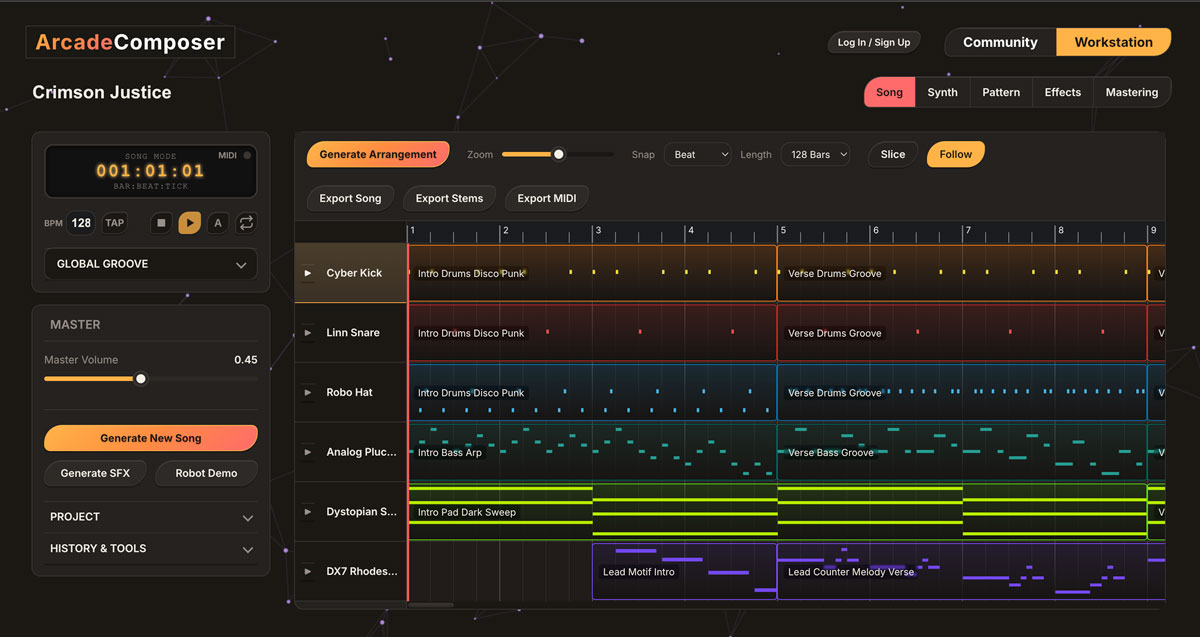

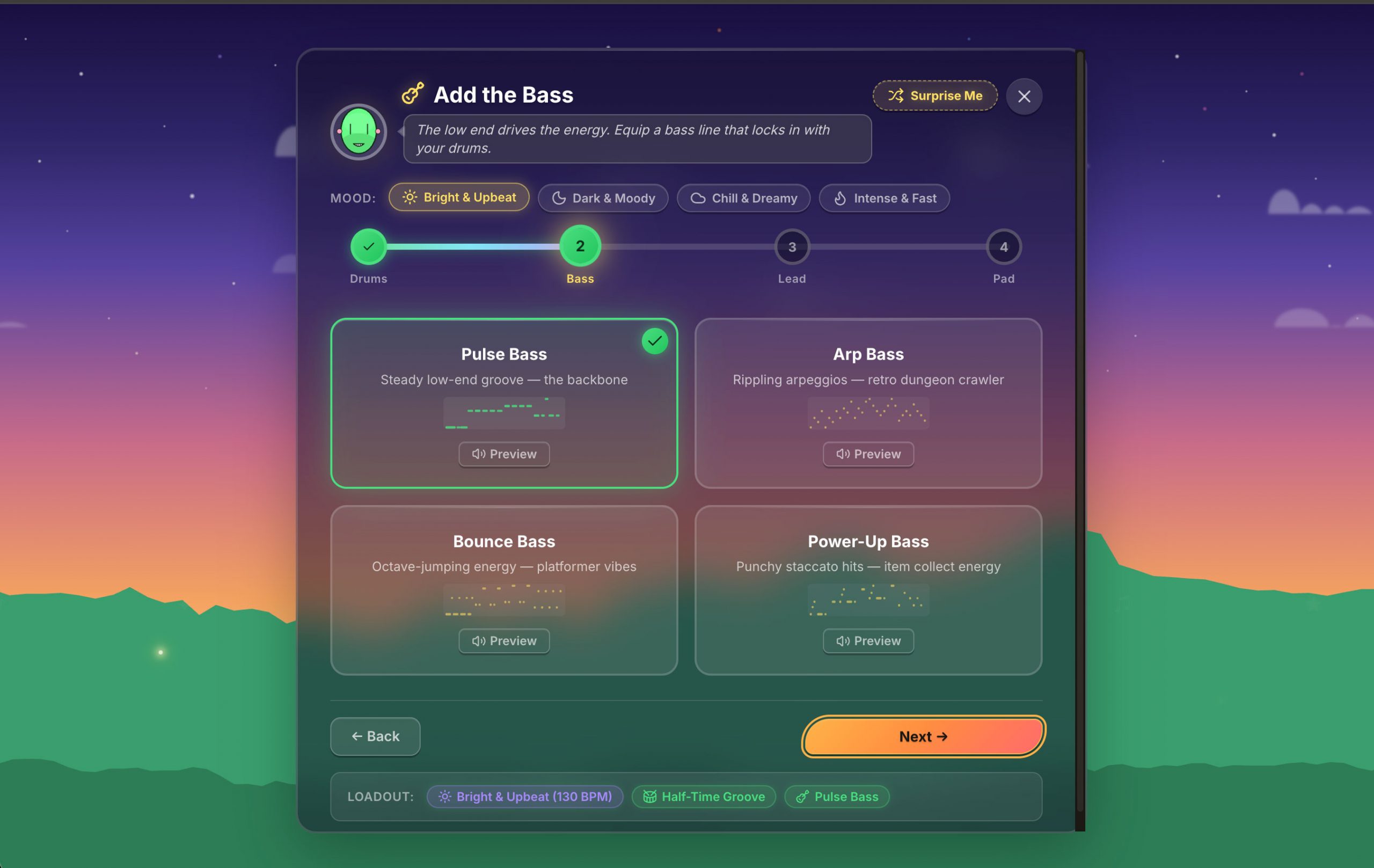

From there the next additions were almost inevitable. I added polyphony because one-note sound design kept nudging toward chords. I added a piano roll because once I could sequence a riff, I could test the emotional feel of a scene in seconds instead of guessing. I added playback directions because looping behavior matters more than people think, especially in game-like music where repetition is structural. I added an arrangement view because four bars only get you so far before you need pacing, transitions, and shape. I added automation because I didn’t just want sounds; I wanted sounds that evolved. I added MIDI input because I had a keyboard sitting on my desk and there was no good reason not to use it. I added MIDI learn because once control becomes tactile, it is hard to go back.

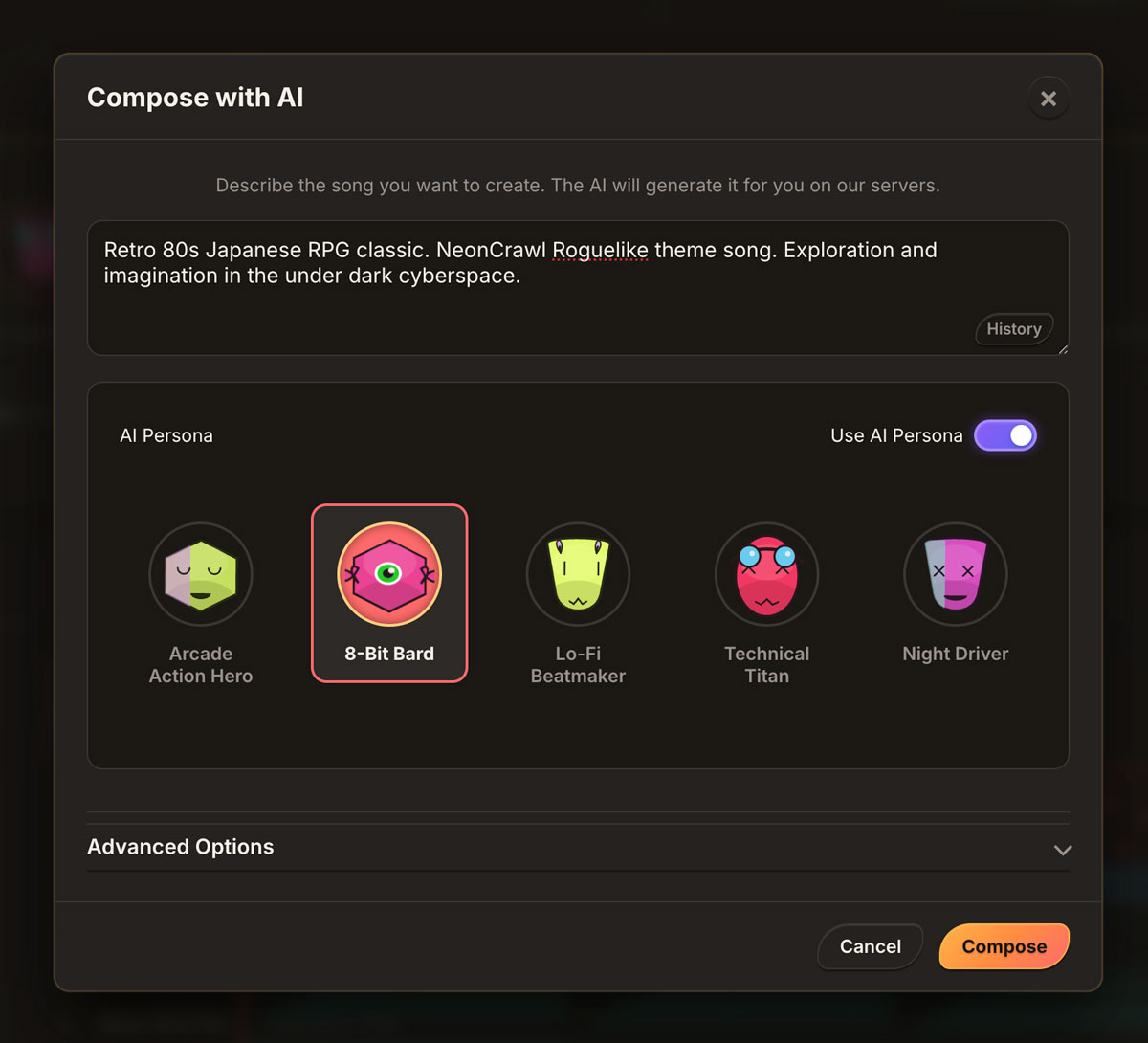

Eventually I added AI composition too, not because I wanted the machine to do the creative work for me, but because sometimes the hardest part is simply getting started. Sometimes you know you want something eerie, synthetic, tense, or fast, but you need something to react to before your own taste can really engage. A rough first draft, even a flawed one, can be more useful than a blank screen.

At some point I had to stop pretending this was still a tiny synth experiment. It had become a full music workstation, and that project eventually turned into Arcade Composer.

The Original Goal Never Changed

What surprised me is that even as the project got dramatically bigger, the core goal barely changed at all. The real question underneath the whole thing was always the same: can I get from idea to usable game audio without killing momentum?

That question shaped almost everything. I was not trying to build a browser clone of a professional DAW, and I was not trying to rack up a checklist of expected features. I was trying to build something that made sense in the context where I actually work: in a browser tab, next to a prototype, while I am still thinking like a game developer rather than switching into some completely separate production pipeline.

That is why immediacy mattered so much. It is why I cared about being able to open it and just start. It is why I wanted presets to feel more like moods, playable scenes, and useful starting points than sterile categories. It is also why so many decisions came directly from friction I personally ran into while using it. When playback timing lived in the wrong place, I heard it. When the UI and audio engine got in each other’s way, I felt it immediately. When something interrupted flow, it was obvious because I was the one trying to use the thing in the middle of real work.

That ended up being one of the best design filters I had. If the tool annoyed me while I was actually making something, it needed to change.

The Part Nobody Warns You About: Stale Closures

One of the most persistent and obnoxious bugs in this project came from stale closures in React. It is the kind of issue that sounds boring until it quietly burns through an entire weekend and leaves you wondering whether you are losing your mind.

The playback engine lives in a Web Worker, and the worker posts messages back to the main thread to trigger notes, update automation, and keep the whole system in sync. That part was fine in theory. The real problem was that a React callback captures the state from the render that created it and keeps holding on to it unless you are careful, which meant I would sometimes have code running against the wrong instrument data, values from an earlier render, or some outdated version of the project that no longer reflected reality.

That kind of bug is especially nasty because the result is often not catastrophically broken. Everything is just slightly wrong. You hit play and something feels off, but not in a way that immediately points to the cause. Those are the bugs that make you doubt your ears, your code, and your sanity in rotation.

The eventual fix was straightforward once I fully committed to it: stop trusting captured values in the live playback path and move to refs for the data that actually needed to stay current. The hard part was that this was one of those problems I “fixed” several times before I actually fixed it. It kept resurfacing in slightly different forms until I established a better pattern across the project instead of treating each instance like an isolated glitch.
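The difference between a captured value and a ref is easier to see in code than in prose. What follows is a minimal sketch in plain TypeScript with no React involved: `Ref<T>` only imitates the `{ current }` shape that React's `useRef` returns, and the `Project` and `WorkerMessage` types are hypothetical stand-ins for the real project data and worker protocol, neither of which is shown in this post.

```typescript
// Hypothetical shapes for illustration; the real types are not shown in the post.
type Project = { instrument: string };
type WorkerMessage = { type: "note"; pitch: number };
type Ref<T> = { current: T }; // same shape as the object useRef returns

// Stale version: the handler closes over the project it was created with.
function makeStaleHandler(project: Project, log: string[]) {
  return (msg: WorkerMessage): void => {
    log.push(`${project.instrument}:${msg.pitch}`);
  };
}

// Ref version: the handler looks up ref.current each time it runs.
function makeRefHandler(projectRef: Ref<Project>, log: string[]) {
  return (msg: WorkerMessage): void => {
    log.push(`${projectRef.current.instrument}:${msg.pitch}`);
  };
}

function runDemo(): string[] {
  const log: string[] = [];
  let project: Project = { instrument: "acid-lead" };
  const projectRef: Ref<Project> = { current: project };

  // Both handlers are created once, before anything changes.
  const stale = makeStaleHandler(project, log);
  const fresh = makeRefHandler(projectRef, log);

  // State is replaced with a new object, the way React state updates work,
  // and the ref is pointed at the new value.
  project = { instrument: "sub-bass" };
  projectRef.current = project;

  // A note message arrives from the worker after the change.
  const msg: WorkerMessage = { type: "note", pitch: 60 };
  stale(msg); // still reads the old "acid-lead" project
  fresh(msg); // reads the current "sub-bass" project
  return log;
}
```

In an actual component, the usual form of this pattern is to create the ref with `useRef` and assign the latest state to `ref.current` whenever it changes, so a long-lived message handler never depends on the render that created it.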

That experience was a good reminder that real-time creative tools on the web are often hardest in the least glamorous places. The big ideas are fun. The real pain usually lives in the ugly timing bugs, the state mismatches, and the weird little collisions between audio, rendering, interactivity, and UI.

It Changed How I Think About Game Audio

Before this project, I mostly thought about game music in a more traditional way: compose a track, export the file, bring it into the game, and call it done. There is nothing wrong with that workflow. Plenty of great games work exactly that way, and I still think it makes sense in a lot of cases.

What changed for me was not that I suddenly rejected that pipeline, but that I became more interested in sound as part of the system design instead of only as a finished asset. That shift mattered especially because I was already building games with procedural and modular thinking at their core. In something like Neon Crawl, where systems are already mutating, recombining, and generating variation, it started to feel strange that the music should be the one thing locked in place from the start.

That does not mean I want everything to be fully automated, and it does not mean I want to hand authorship over to a button. What interested me was a different kind of workflow: generate a strong starting point, mutate it, swap the palette, shift the feel, adapt it to a biome, faction, mechanic, or encounter, and keep human taste in the loop the entire time. That creates a very different feedback cycle.

If I can make a drone, darken the filter, stretch the rhythm, change the texture, and hear the result against gameplay almost immediately, then sound stops being a late-stage asset pass and becomes part of how I think through design. That was probably the biggest change this whole project created for me. It didn’t just give me a tool; it changed the relationship between music and prototyping.

If You’re an Indie Dev, You’ve Probably Seen This Happen

I don’t think there’s some big moral here. It’s just something I’ve noticed over and over in indie development: the small, messy tools you build for yourself sometimes end up mattering more than you expected. A rough script, a browser experiment, a temporary editor utility, some little internal thing you made to get past a bottleneck—those projects can stick around because they solve a real problem better than the “proper” solution ever did.

A lot of them go nowhere, obviously. Some are just clutter. But every now and then, one keeps surviving every cleanup pass because you actually use it. Then you improve it a little. Then it solves the next problem too. Then eventually you realize it stopped being a side experiment a long time ago.

That’s basically what happened here. What started as a small synth for making better game sounds gradually turned into the tool I keep open next to my prototypes when I need to turn a mood or mechanic into something audible without losing momentum. That’s really the whole story. Not feature count, not scope, and not some grand transformation narrative. It just kept being useful, so I kept building it.

Where It Is Now

That browser experiment is now Arcade Composer. Somewhere along the way it stopped being a little sound-effect toy and became the thing I reach for when I want to sketch a sound, sequence an idea, build a loop into a track, or get past creative paralysis with a generated starting point that I can then actually shape into something specific.

What matters to me is not really the list of features, even though the feature list is obviously much larger now than it was at the beginning. What matters is that the tool still solves the same problem it solved in its earliest form. It keeps me moving. It keeps me close to the game, close to the mood, and close to the original creative impulse instead of forcing me into a completely different workflow every time I need audio.

That was the point when I started building it, and it is still the point now.

The Real Lesson

The main thing I keep coming back to is that tools are not neutral. The thing you build for the game can start shaping the game back. It can influence the pacing, the mood, the texture of the experience, and even the way you think while prototyping.

This browser-synth detour changed the way I think about audio, procedural systems, and creative workflow in general. More than that, it reminded me that the project you think is a side tool is sometimes the thing quietly reshaping everything else you are making. If you have built some janky little helper app and you keep reaching for it, that is worth paying attention to. It may not be a side note at all. It may be the real thing.
